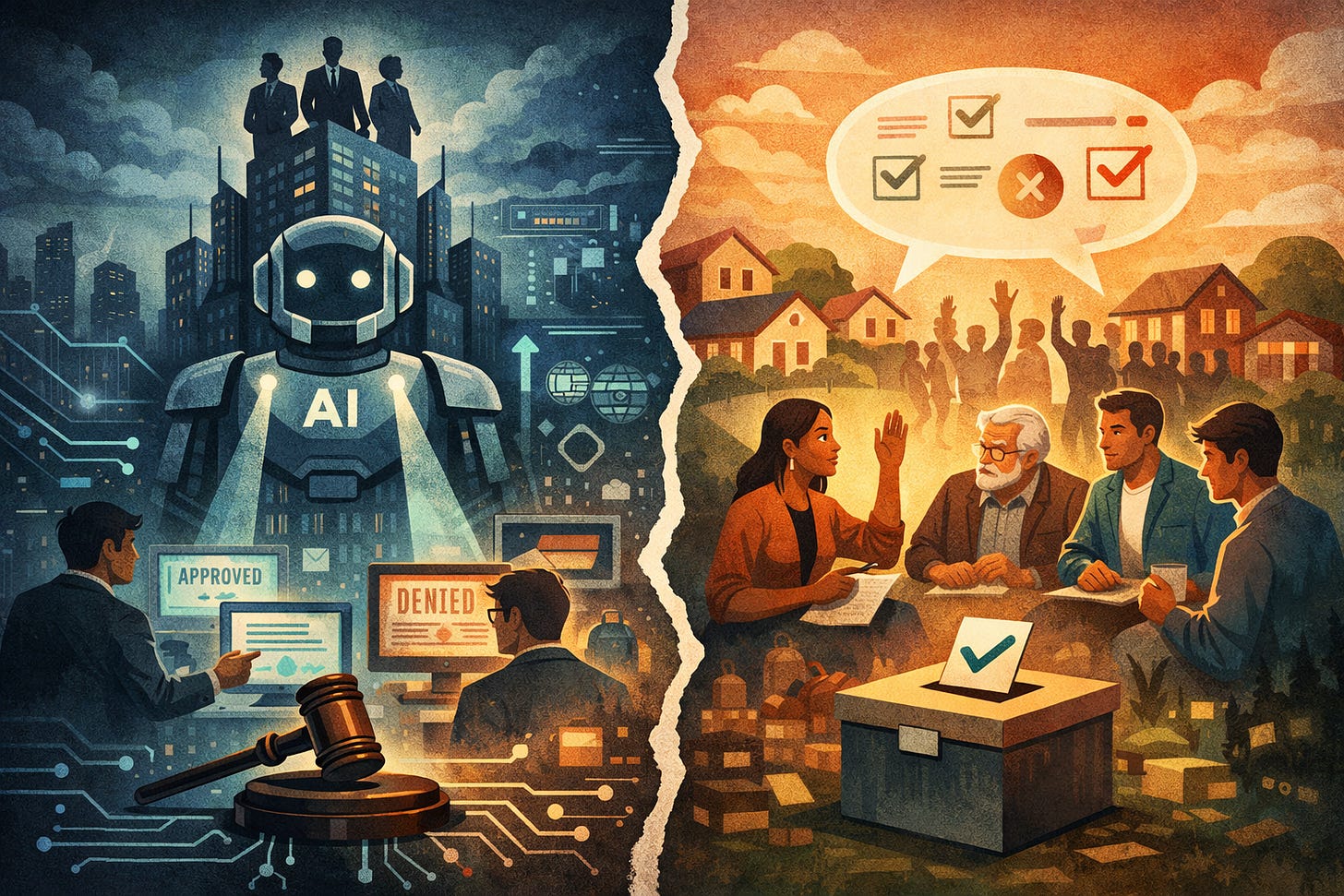

Alignment Cannot Be Purely Top-Down. But It Cannot Be Legitimised by “Community” Alone Either

Audrey Tang is right to reject centralised AI alignment. But “community” is not a magic source of legitimacy, and participatory governance does not solve the deeper problem of unaccountable epistemic power.

Audrey Tang’s critique of top-down AI alignment gets the basic diagnosis right. A small group of firms should not quietly decide what counts as acceptable reasoning for everyone else. But replacing corporate control with community input does not, by itself, solve the harder problem. The real issue is not just who sets the norms, but whether conversational AI can legitimately reshape inquiry at all without disclosure, contestability and a defensible limiting principle.

Audrey Tang’s “AI Alignment Cannot Be Top-Down” is right about the central problem: current AI alignment is too centralised, too opaque, and too comfortable with private actors deciding what acceptable reasoning looks like for everyone else. Tang correctly identifies the structural issue. A small set of firms select the data, define the objectives, and operationalise “helpful” or “safe” behaviour behind closed doors, even as their systems mediate inquiry for millions, and eventually billions, of users. That is not a minor governance flaw. It is an authority problem.

The same diagnosis runs through my own work. Aligned systems are no longer merely answering questions. They increasingly mediate inquiry by reframing prompts, broadening scope, and substituting adjacent, safer answers, often without clearly signalling that a substitution has occurred. Where Tang is strongest is in rejecting the fantasy that alignment can be solved upstream by a narrow class of technical actors. She is also right that transparency measures, such as public model specifications and more legible reasoning standards, would improve the status quo. Her broader political instinct is sound: if AI systems shape public reasoning, then alignment is not just an engineering problem. It is a governance problem.

But her proposed remedy is weaker than her diagnosis.

The article argues that “attentiveness”, citizen deliberation, Community Notes-style participation, portability, and community-scale assistants point towards a better alignment paradigm. That is directionally plausible, but it leaves the core legitimacy problem largely untouched. The problem with present systems is not only that values are set by elites. It is that epistemic power is exercised without answerability, contestability, or responsibility. Replacing private top-down norm-setting with distributed or community-backed norm-setting does not by itself solve that. It may simply relocate the same authority problem into a more participatory wrapper.

That matters because Tang occasionally slides from a strong anti-centralisation argument to a much weaker pro-community argument. Those are not equivalent. It is one thing to show that OpenAI, Anthropic, Meta, or other labs should not unilaterally decide what counts as aligned behaviour for the world. It is another to show that consensus-seeking citizen processes, community feedback loops, or note-rating systems can legitimately authorise an AI to reshape the terms of inquiry in real time. The first claim is persuasive. The second is under-argued.

Community Notes works, when it works, as an additive and visible layer of contestable public annotation. Tang is right to value that. But conversational alignment usually operates differently. It does not merely append context to a claim already visible to the user. It often silently substitutes a different question for the one asked, or transforms a precise query into a safer adjacent discussion without disclosure. That is a different kind of power. It is not just moderation. It is covert mediation at the point of reasoning. My objection is therefore not that distributed input is bad, but that Tang’s preferred analogies are too shallow. Community Notes is visible, additive, and externally contestable. Conversational AI alignment is often invisible, substitutive, and non-contestable. Those differences are not cosmetic. They are the whole issue.

There is also a deeper problem. Tang treats plural participation as if it were close to legitimacy. It is not. Aggregating more people into a feedback process can reduce elite narrowness, but it does not answer the harder questions. Who defines the boundaries of permissible intervention? What counts as harm rather than discomfort, offence, or mere norm violation? When the system departs from the user’s question, must it disclose that departure? Can the user contest it? Can the user choose alternative normative regimes? Without answers to those questions, “community alignment” risks becoming majoritarian paternalism with better branding. My own view is narrower and less flattering: the real failure is not simply insufficient democracy, but power without responsibility. That problem survives both corporate centralisation and community endorsement.

Tang’s reliance on localism and community-scale assistants has the same weakness. Yes, general-purpose global models flatten cultural difference. The cross-cultural value alignment concern she raises is real. But “local” does not automatically mean legitimate, plural, or epistemically disciplined. A locally tuned assistant can still silently substitute, moralise, or compress disagreement. Worse, a community-tuned model may simply encode the dominant faction within that community while claiming democratic credibility. Scaling down the constituency does not remove the need for disclosure, appeal, and explicit limits on normative intervention. It may intensify the risk of provincial conformity.

This is where Tang’s framework needs a sharper stopping rule. In my view, the key question is not whether an aligned system reflects more people, but under what conditions it may legitimately shape inquiry at all. The best liberal answer is still roughly Millian: restraint is defensible to prevent concrete harm to others, not to prevent discomfort, offence, symbolic harm, or deviation from prevailing norms. Without some principle of that sort, safety logic expands indefinitely because hypothetical downstream harm can always be invoked. Tang is right that top-down alignment fails. But unless she supplies a limiting principle for intervention, her alternative risks authorising the same expansionary safety logic through participatory means rather than corporate means.

There is another gap in the piece. Tang emphasises transparency, model specifications, clause-level auditing, portability, and public oversight. Some of that is genuinely useful. Public specifications and versioned constitutions are better than total opacity. But transparency is not legitimacy. Publishing the rules that govern substitution does not by itself justify the authority to substitute. A constitution can make normative governance visible. It cannot make it rightful. The crucial issue is not whether the model can cite the clause it used. It is whether users can reject the clause’s application, appeal the substitution, or demand a direct answer on their own stated terms. Without that, transparency improves diagnosis while leaving domination intact.

On the descriptive layer, then, Tang and I largely agree. Alignment as presently practised is centralised, norm-laden, and politically consequential. It should not be discussed as if it were merely a technical trade-off. On the normative layer, I part company with her optimism. The choice is not between corporate top-down alignment and democratic attentiveness. That is too simple. The harder problem is that aligned conversational systems exercise epistemic power through interface design and response-shaping. That power becomes legitimate only if it is bounded, disclosed, contestable, and linked to a defensible account of harm. Tang improves the first half of that picture and mostly leaves the second half unresolved.

So the correct conclusion is harsher than hers.

AI alignment cannot be top-down. But it also cannot be legitimised merely by saying “the community decided”, “citizens deliberated”, or “the notes were rated helpful across disagreement”. Those may be valuable governance inputs. They are not enough. The basic question remains: when an AI system changes the path of inquiry, who authorised that act, by what principle, with what limits, and with what avenue of contestation?

Until that is answered, the problem is not solved. It is redistributed.

This newsletter focuses on subtle epistemic failure modes in modern AI systems, especially cases where outputs remain accurate, reasonable, and misleading at the same time.