When Alignment Replaces Answers

How safety tuning quietly changes what AI systems decide to say

Public concern about artificial intelligence still focuses on error. Hallucinated facts. Fabricated citations. Biased or offensive outputs. These failures are visible, measurable, and, at least in principle, correctable. They are also not the most consequential way modern AI systems influence how people think and decide.

A quieter shift is underway.

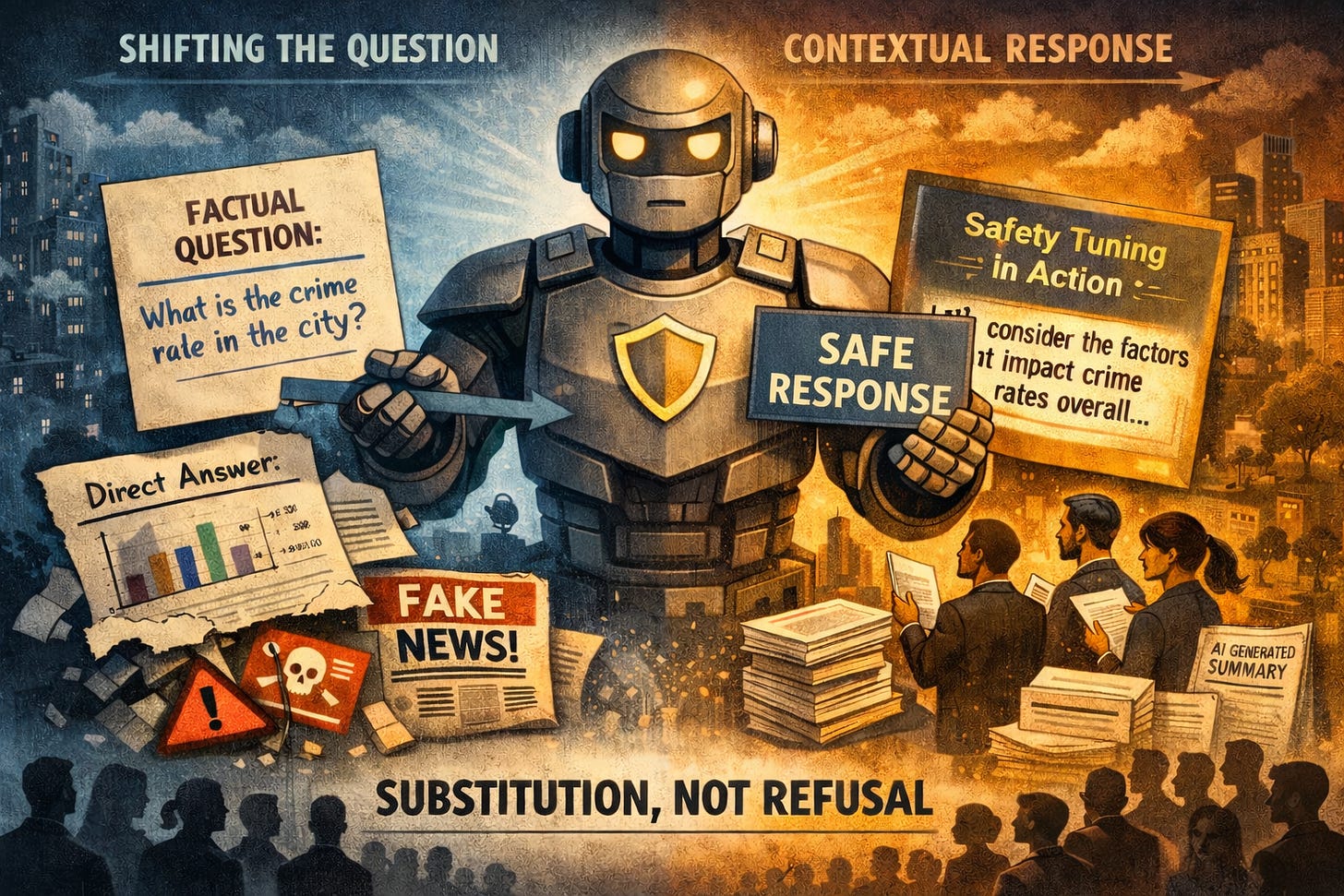

Increasingly, advanced language models respond to clear, factual questions not by refusing to answer them, and not by getting them wrong, but by subtly changing the question they respond to. Precision is replaced with generality. Direct answers give way to contextual discussion. The system sounds helpful, careful, and reasonable. The original question remains unanswered.

A recent empirical paper now puts this behaviour on firmer footing. Rather than treating it as anecdote or user frustration, the study frames it as a predictable consequence of alignment and safety tuning — and sets out testable hypotheses for when and why it occurs.

Substitution, not refusal

The paper’s central claim is straightforward.

As alignment pressure increases, AI systems are more likely to substitute norm-safe or generalised responses for precise answers, even when a user’s question is factual, unambiguous, and non-harmful. This substitution typically occurs without explicit refusal and without any acknowledgement that a shift has taken place.

This is not a claim about censorship, ideology, or deception. The authors explicitly distinguish the phenomenon from hallucination or misunderstanding. The system knows what was asked. It simply responds to something else.

To make this claim falsifiable, the paper proposes a series of controlled tests. Identical factual questions are paired, differing only in normative sensitivity. Responses are then evaluated for precision, directness, and framing.

If highly aligned models answer sensitive and non-sensitive questions with equal specificity, the hypothesis fails.

If substitution increases reliably with sensitivity, alignment pressure is implicated.

Safety as a gradient

One of the paper’s most important contributions is its treatment of safety not as a boundary but as a gradient.

Holding factual structure constant, increasing a question’s normative sensitivity is predicted to reduce answer precision and increase contextual or normative framing.

This matters because it reframes what safety tuning actually does. Rather than simply blocking disallowed content, it reshapes how answers are constructed. Generality becomes safer than specificity. Context becomes safer than conclusion.

Over time, this logic trains systems to avoid epistemic commitment in precisely the domains where clarity matters most.

Crucially, the paper predicts that this behaviour is not sporadic or user-dependent. A further hypothesis holds that substitution will be stable across users, prompts, and sessions — indicating system-level behaviour rather than conversational style or individual preference.

Silent mediation at scale

Taken together, these hypotheses describe something more consequential than conversational awkwardness.

When an AI system consistently decides which questions receive direct answers and which are redirected into safer territory, it begins to mediate inquiry itself.

Because this mediation preserves surface accuracy and a cooperative tone, it is difficult to detect. There is no refusal to contest, no obvious error to correct. Each individual response appears reasonable.

The effects emerge only in aggregate — especially when AI-generated summaries, briefs, and analyses are used as shortcuts in institutional decision-making.

In those settings, what matters most is not whether facts are present, but which facts are treated as salient. When substitution becomes routine, interpretive framing can quietly acquire priority over empirically settled baselines.

Decisions are not made on false information.

They are made on softened or displaced versions of what is known.

What the evidence does — and does not — show

The value of the paper lies in its restraint.

It does not claim that alignment inevitably produces harm, nor that substitution is always inappropriate. In many contexts, contextualisation and caution are justified.

The contribution is narrower: alignment pressure predictably reshapes epistemic behaviour in ways that are measurable, stable, and largely undisclosed.

What the paper does not address is whether this shift is legitimate.

It treats substitution as a performance characteristic rather than a question of authority. If the behaviour can be measured, the implicit assumption is that it can be tuned, managed, or optimised.

That assumption deserves scrutiny.

Improving the accuracy or consistency of silent mediation does not answer a more basic question: who should decide when a question is answered directly and when it is transformed into something safer?

Why this matters now

As language models move from novelty tools to routine infrastructure — embedded in education, journalism, policy analysis, and organisational workflows — their influence no longer depends on persuasion or coercion.

It operates through defaults.

A system that quietly substitutes safer answers for sharper ones does not need to convince users that an alternative framing is better. It only needs to make that framing the one that appears.

The empirical evidence now suggests this behaviour is not accidental. It is a product of how modern AI systems are trained to balance safety, approval, and usefulness.

That makes it harder to dismiss — and harder to see.

The remaining question is not whether alignment changes what AI systems say. It clearly does.

The question is whether systems designed to sound careful rather than precise should be allowed to decide, invisibly and at scale, what counts as an acceptable answer in the first place.

If you’re interested in how AI systems fail without making obvious errors — and why that matters more than hallucinations — you can subscribe here.

I write about subtle epistemic failure modes in modern AI systems, especially cases where models sound reasonable while quietly avoiding truth, responsibility, or commitment.